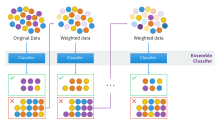

Boosting (machine learning)
